Have you seen the movie Minority Report with Tom Cruise? That’s immediately what I think of every time I hear the word “intent.”

It’s one of the most mind-bending movies I’ve ever seen.¹ You should watch it if you haven’t.

Spoiler alert: it’s about a concept called “pre-crime.” Tom Cruise goes around arresting people for murders they haven't committed yet. The plot twist happens when Tom Cruise gets predicted as a future murderer himself, then goes on the run to prove the system wrong.

Aside from being entertaining, it’s a moral debate about the ethics of intent versus action. It’s easy to think it’s always ethical to stop heinous crimes like murder before they happen, but reality is far more complicated.

Intent isn't some dystopian future we should be afraid of, though. It's something we already do naturally without thinking about it. We’ve been evaluating intent for 200,000 years.

You read a room and sense hostility before anything is said. You slow down when a car pulls out of a driveway even though nothing has happened yet.

On a behavioral level, you know exactly what I’m talking about when I say “intent.”

The irony is most of us have always assumed intent is impossible in security.

Something shifted recently. I’m seeing “intent” talked about everywhere now. It’s a fascinating trend.

Intent being the topic du jour in cybersecurity is a recent thing, but some teams have been working on this problem for a long time.

The DTEX co-founders have been researching and building products around intent for almost two decades. DTEX as a company has been building intent-based products since 2015. I turned to them first to help me understand why intent matters and, more importantly, how we need to execute it this time around.

This article is a combination of my own thoughts and research around intent in security, plus decades of accumulated knowledge and experience from the DTEX team.

Here’s what I’m now certain of after doing this research: we're going to see an inflection point for intent in security.

This inflection point could turn out well or poorly. We’re standing at the crossroads right now. I think it’s our obligation as security professionals to lean into intent and execute it well.

First, let’s ground ourselves and talk about what intent means in the context of cybersecurity in the first place.

What intent means in security

Intent is the why behind an action. It’s the motivation driving a human or AI agent toward a particular outcome.

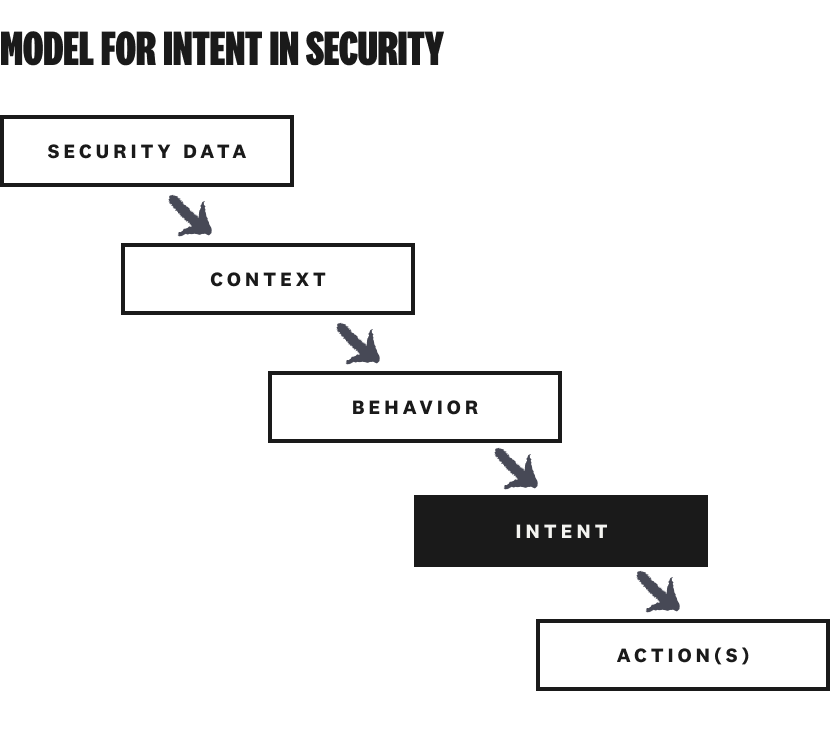

Security products have spent two decades getting very good at detecting what happened across multiple layers of the technology stack (a file moved, a credential was used, an API was called). Raw log data is a starting point, but logs alone aren’t enough. Detecting “what happened” only works after the action has occurred. Understanding intent helps us get ahead of it.

We’ve gotten a lot better at context (who, when, from where), too. Context starts to aggregate and curate security data into something more useful and consumable by both human security professionals and autonomous agents performing analysis.

What we’re usually trying to boil this information down to is bad behavior. Something happened in our environment…now what?

Context and behavior are helpful, but intent and action are really what we’re after.

Intent is the next layer up. Given what happened and the context, what was this person (or agent) actually trying to accomplish?

If we know the context, behavior, and intent, the action we need to take is probably clear.

A critical point in this whole discussion is that nobody truly knows intent. Even DTEX, which has built its entire platform around this concept, frames it carefully. They infer intent from behavioral evidence rather than claiming to read minds.

Bigger picture, understanding intent is the holy grail for human intel professionals. They will be the first to tell you just how elusive it is.

The definition of intent is worth being specific about. It’s very easy to say a security product has “intent” as a feature or outcome without saying how. But the “how” part matters a lot.

Tactically, the most accurate way we’ve found yet to infer intent is to analyze behavior across the full spectrum of activity: what happened before the trigger event, what happened during, and what happened after.

Most security products only see the trigger itself, which is why they can show what happened at a fairly atomic level (and feed this information into a broader set of context, like a SIEM) but usually not why.
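To make the before/during/after idea concrete, here's a minimal sketch. It assumes events are simple timestamped records for a single environment. The `Event` type, the action names, and the window sizes are all hypothetical and chosen only for illustration; they aren't taken from any product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Event:
    actor: str
    action: str
    at: datetime


def window_around_trigger(events, trigger, before=timedelta(days=7),
                          during=timedelta(minutes=30), after=timedelta(days=1)):
    """Split one actor's events into before/during/after buckets around a trigger."""
    buckets = {"before": [], "during": [], "after": []}
    start_during = trigger.at - during
    end_during = trigger.at + during
    for e in sorted(events, key=lambda ev: ev.at):
        if e.actor != trigger.actor:
            continue  # only look at the actor who caused the trigger
        if trigger.at - before <= e.at < start_during:
            buckets["before"].append(e)
        elif start_during <= e.at <= end_during:
            buckets["during"].append(e)
        elif end_during < e.at <= trigger.at + after:
            buckets["after"].append(e)
    return buckets


# Hypothetical activity around a bulk download (the trigger event).
t0 = datetime(2024, 1, 10, 14, 0)
trigger = Event("alice", "bulk_download", t0)
events = [
    Event("alice", "searched_for_customer_list", t0 - timedelta(days=2)),
    Event("alice", "bulk_download", t0),
    Event("alice", "renamed_archive", t0 + timedelta(minutes=5)),
    Event("alice", "uploaded_to_personal_cloud", t0 + timedelta(hours=3)),
    Event("bob", "bulk_download", t0),
]
buckets = window_around_trigger(events, trigger)
```

A tool that only sees the trigger gets one row (`bulk_download`). The three buckets give an analyst, or a model, the surrounding story to reason over.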

Next, let’s talk about why AI and agents make intent both more possible and more necessary than ever.

Why intent, and why now

Here’s the punch line: all the ingredients for using intent in security have finally come together.

The current wave of AI (LLMs, agents, and the rest) has given us several technical ingredients that are either new or significantly more powerful than what we had at our disposal earlier.

Models and reasoning are faster, cheaper, and more powerful. Context windows are expanding. Integrations and tool calling are broader and more capable than ever. Harnesses add another layer of utility on top of models alone.

You get the idea. The technical ingredients alone aren't the story, though.

I’ve worked in security long enough to know that technology alone isn’t enough for major changes to break through. You need a burning platform.

The burning platform for intent is AI agents.

Agents are hitting their own inflection point right now…and bringing consequential security implications along as baggage.

Agents operate with real access to real systems. When an agent exfiltrates data or executes a destructive action, the question "was that intentional?" has enormous stakes for response, liability, and remediation.

We’re going to start taking intent seriously because we have no other choice.

The techniques and tools we’ve used for security in the past are still useful, but they’re almost entirely deterministic.

For example, behavioral analytics delivered alerts without the "so what." You'd get a notification that someone accessed files outside their normal pattern, and you still had no idea whether that person was about to do something harmful or just helping a colleague while they were out.

That’s not even the worst part. A 2021 MITRE study found that existing UEBA tools caught fewer than 5% of malicious insiders. False positives are annoying, but complete misses can be catastrophic.

The gap between what we hoped behavioral analytics (and deterministic approaches at large) could do and what they actually delivered was enormous.

The signal was real, but the meaning was missing. Intent is our path from signal to meaning.

So far, I’ve given you the (mostly) good news about intent now being both possible and necessary. The bad news is that actually making intent usable is harder than it looks. Let’s talk about that next.

Why intent is harder than it looks

A lot of the current discussion around intent is just words. Words alone are unsatisfying for me in a situation like this. I want to understand the implementation of intent in day-to-day security work.²

To understand where intent is going, you have to understand where it’s been. Understanding the long, winding road of research around intent in security helps you appreciate how difficult implementation really is.

In some ways, we’ve been here before. In the early 2010s, people thought sentiment analysis was going to solve everything — read people's emails, analyze their mood, and catch disgruntled employees before they did something bad.

It didn't work because sentiment is too surface-level. It can’t read minds. It's also too easy to game, too easy to misinterpret, and too contentious from a legal standpoint.

As a result, the field split into two research paths.

One path went psychosocial. The approach was to build psychological profiles and understand the motivations and stressors that precede insider threat activity. This is real science, and it informs a lot of modern insider risk programs. But it's slow and hard to operationalize at scale.

The other path went data-driven. It was built around MITRE's behavioral sciences work. Instead of trying to understand why someone might act, this research focused on which behavior sequences actually precede bad outcomes.

This path had a breakthrough. You can’t look for anomalies in isolation. You have to look for sequences of actions, co-occurring behaviors, and combinations that only appear together when something is actually wrong.

There's an important caveat here, though: anomaly detection isn't intent. It's a prerequisite, but over-emphasizing it causes massive false positive rates. We all know how that story ends.
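Here's a rough sketch of the difference between a single anomaly and a sequence. It assumes one actor's activity is available as `(timestamp, action)` tuples. The `SEQUENCE` list and the 14-day window are hypothetical placeholders, not a researched behavior pattern.

```python
from datetime import datetime, timedelta

# Hypothetical ordered sequence; real ones would come from research and tuning.
SEQUENCE = ["bulk_search", "archive_created", "external_upload"]


def matches_sequence(events, sequence, within=timedelta(days=14)):
    """Return True if `sequence` occurs in order (not necessarily adjacent)
    inside a span no longer than `within`.

    `events` is a list of (timestamp, action) tuples for a single actor.
    """
    ordered = sorted(events)
    for i, (start, action) in enumerate(ordered):
        if action != sequence[0]:
            continue
        step = 1  # the first step matched at `start`
        for ts, act in ordered[i + 1:]:
            if ts - start > within:
                break  # too spread out to count as one sequence
            if step < len(sequence) and act == sequence[step]:
                step += 1
        if step == len(sequence):
            return True
    return False


day = datetime(2024, 3, 1)
isolated = [(day, "archive_created")]
chained = [
    (day, "bulk_search"),
    (day + timedelta(hours=1), "email_sent"),
    (day + timedelta(hours=2), "archive_created"),
    (day + timedelta(days=1), "external_upload"),
]
print(matches_sequence(isolated, SEQUENCE))  # False
print(matches_sequence(chained, SEQUENCE))   # True
```

Creating an archive on its own doesn't match. The same action does match when it shows up between a bulk search and an external upload.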

The second path is closer to what's actually working today (and what DTEX uses to protect critical government and commercial enterprises). The magic they found is a balance of deterministic and non-deterministic techniques that turn anomaly detection into intent. This balance is where things get really interesting.

A deterministic model (with rules, logic, and hardcoded behavioral sequences) gives you precision and explainability.

A non-deterministic model (an LLM reasoning over context) gives you flexibility and the ability to synthesize ambiguous signals.

Neither alone is enough. That’s why intent is harder than it looks.
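One way to picture the balance is a two-layer check. This is a sketch under my own assumptions, not a description of how DTEX or anyone else implements it. The rule names are hypothetical, and `stub_reasoner` stands in for an LLM call so the example runs offline. The design choice shown here is that the non-deterministic layer only interprets evidence the deterministic layer has already found, and anything it flags still goes to a human.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Assessment:
    rule_hits: List[str]       # deterministic, explainable evidence
    hypothesis: str            # non-deterministic interpretation of that evidence
    confidence: float          # 0.0 to 1.0, as reported by the reasoner
    needs_human_review: bool


# Hypothetical deterministic rules: name -> predicate over a set of observed actions.
RULES = {
    "bulk_export": lambda actions: "bulk_export" in actions,
    "export_then_external_upload":
        lambda actions: {"bulk_export", "external_upload"} <= actions,
}


def assess(actions: set,
           reasoner: Callable[[set, List[str]], Tuple[str, float]]) -> Assessment:
    # Layer 1: deterministic rules give precision and explainability.
    hits = sorted(name for name, rule in RULES.items() if rule(actions))
    if not hits:
        # No rule evidence, so the reasoner is not allowed to escalate on its own.
        return Assessment([], "no rule evidence", 0.0, False)
    # Layer 2: the reasoner synthesizes the ambiguous signals into a hypothesis.
    hypothesis, confidence = reasoner(actions, hits)
    return Assessment(hits, hypothesis, confidence, needs_human_review=True)


def stub_reasoner(actions, hits):
    """Stand-in for an LLM call; returns fixed interpretations for the demo."""
    if "export_then_external_upload" in hits:
        return ("possible data exfiltration", 0.8)
    return ("large export, purpose unclear", 0.4)


quiet = assess({"login", "read_doc"}, stub_reasoner)
noisy = assess({"login", "bulk_export", "external_upload"}, stub_reasoner)
```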

The trap I’m seeing early-stage companies walk into is relying exclusively on non-deterministic approaches to reach overly confident conclusions about “intent” (air quotes). That’s not intent, at least not at the level of rigor required for security.

A logical counter is that non-deterministic intent is good enough for agents. Agents don’t have jobs, families to feed, or feelings to hurt. If we interpret their intent incorrectly, so what? The consequences are losing a little productivity, or maybe getting turned off. No big deal.

I get the argument, but it’s missing a much broader point about how agents and humans coexist and relate to each other.

Agents and humans, not one or the other

The industry conversation about intent that’s happening right now is almost entirely focused on agents. This is no surprise. Agents are the new, shiny, scary thing.

We're going to have a hybrid workforce for the foreseeable future, though. Humans and AI agents are going to be working alongside each other (with overlapping access and responsibilities) for a looooooong time.

Anyone who thinks there's a hard cutover date where we stop needing to worry about humans is wrong. Humans and agents are inextricably intertwined. We can’t over-index our attention on one or the other. It has to be both.

Here's the thing: agents are actually the easier problem to solve (in some ways).

Why?

Agent reasoning is more transparent than human reasoning. Agents and their reasoning models give you chain-of-thought outputs, tool calls, API logs, and other clues throughout the course of their work and existence. Put differently, there's a paper trail of what the agent was "thinking" and doing.

The tricky and unpredictable part about agents is hallucinations. This is still a challenge despite continuous improvements to models, observability, and guardrails.

Even when hallucinations occur, we often have enough information to understand and correct what happened by analyzing the available data about what the agent was supposed to do and what it actually did.

Determining the intent of humans is much harder. You can't hook into our brains.³ Instead, intent has to be inferred from observable behavior — things like sequences of actions, timing, access patterns, expected behavior, peer norms, communication metadata, and more.

The final twist is that agents rarely act alone. We have to account for the relationships between the agents and the humans using them. That’s why I say agents and humans, not one or the other.

The meaning and risk of agents are defined entirely by the human relationships that surround them. Since agents typically operate under inherited human authority (and intent), permission and responsibility pass through the human identity to the agent.

Comprehensive security and governance means seeing not just the agent itself, but the full chain of ownership, use, delegated authority, and intent behind its actions.
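As a small illustration of what "the full chain" could look like in data, here's a sketch that walks from an agent back to the human whose authority it inherited. The `Principal` record, the `delegated_by` field, and the names in the directory are hypothetical; real identity systems model this very differently.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Principal:
    name: str
    kind: str                           # "human" or "agent"
    delegated_by: Optional[str] = None  # who granted this principal its authority


def authority_chain(name: str, directory: Dict[str, Principal]) -> List[str]:
    """Follow delegation links from `name` up to the originating principal."""
    chain, seen = [], set()
    current = directory.get(name)
    while current is not None:
        if current.name in seen:
            raise ValueError(f"delegation cycle at {current.name}")
        seen.add(current.name)
        chain.append(current.name)
        current = directory.get(current.delegated_by) if current.delegated_by else None
    return chain


directory = {
    "dana": Principal("dana", "human"),
    "research-agent": Principal("research-agent", "agent", delegated_by="dana"),
    "sub-agent": Principal("sub-agent", "agent", delegated_by="research-agent"),
}
print(authority_chain("sub-agent", directory))
# ['sub-agent', 'research-agent', 'dana']
```

An action taken by `sub-agent` can then be evaluated alongside what `dana` has been doing, instead of being treated as an isolated machine event.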

All of this is exactly the opportunity we have right now with intent. If we can solve the harder problem of human intent, the problems with agents and the humans using them become substantially easier.

An approach that handles both humans and agents isn't just an elegant set of words. It's the only thing that makes sense for where we're actually going with our combined human-agent workforce.

From intent to action

Intent alone is a breakthrough, but this is where I want to be really clear: it’s a mechanism, not an outcome.

With or without intent, nearly every workflow we have in security ends with action and response. We use the context we have (even if limited), apply judgment, and take action.

Historically, we’ve usually had to take action without understanding intent. If activity looks concerning, we take action and respond. We may find out later that we were wrong, but better safe than sorry.

Or, worse, if an activity doesn’t look concerning, we let it go. Security teams can’t block everything, and we’ve all learned the hard way what happens when security gets in the way of legitimate business activity.

The problem is when malicious activity slips through and we’re caught responding to an incident after it’s occurred. Intent might enter the equation at this point, but we’re already in a tough spot. It might come out in the wash during forensics, but the timing is too late to be actionable.

Actionable intent makes our security workflows much, much more adaptive.

For example, identity has always been static and reliant on permissions and credentials. What if our security evolved to make access decisions not only based on who someone is and what they’re allowed to do, but why they’re doing it?

A user who has had access to a customer list for 457 days may be a safe bet…but if they go in on day 458 and try to download 20,000 customer records, that represents a much different threat to the business than the previous 457 days. Understanding the intent of the action is critical to security going forward.

This example is the whole “looking for sequences of actions, co-occurring behaviors, and combinations that only appear together when something is actually wrong” bit from earlier.

Something like downloading 20,000 customer records might set off an alert in a reasonably sophisticated security organization with well-engineered detection rules. For others, it won’t — and now they’ve got a problem.

What the probabilistic, sequences-of-actions version of intent adds is the ability to analyze a pattern of behavior and take action when something significant changes.
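A toy version of the day-458 example might look like the sketch below. It compares today's record count against the same user's own history. The `factor` and `floor` thresholds and the sample counts are made up for illustration. A real implementation would look at far more than one number, and a change like this is a signal to investigate, not proof of intent.

```python
from statistics import median


def departs_from_baseline(history, today, factor=10, floor=100):
    """Flag `today` when it exceeds both an absolute floor and `factor` times
    the user's own median daily count."""
    baseline = median(history) if history else 0
    return today > floor and today > factor * baseline


# Hypothetical: 457 days of ordinary access, a few records per day.
history = [12, 8, 15, 10, 9] * 91 + [11, 14]

print(len(history))                            # 457
print(departs_from_baseline(history, 40))      # False
print(departs_from_baseline(history, 20_000))  # True
```

The baseline is per user, so the same 20,000-record pull could be ordinary for a data engineer and a sharp departure for an account manager.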

Adding intent into the mix alongside existing controls (fancy detection engineering or otherwise) makes the actions we take as security teams adaptive and precise.

There’s one other critical dimension intent gives us, which is proportionate response. This topic is a whole other discussion, so let’s cover it next.

Good intent, bad intent

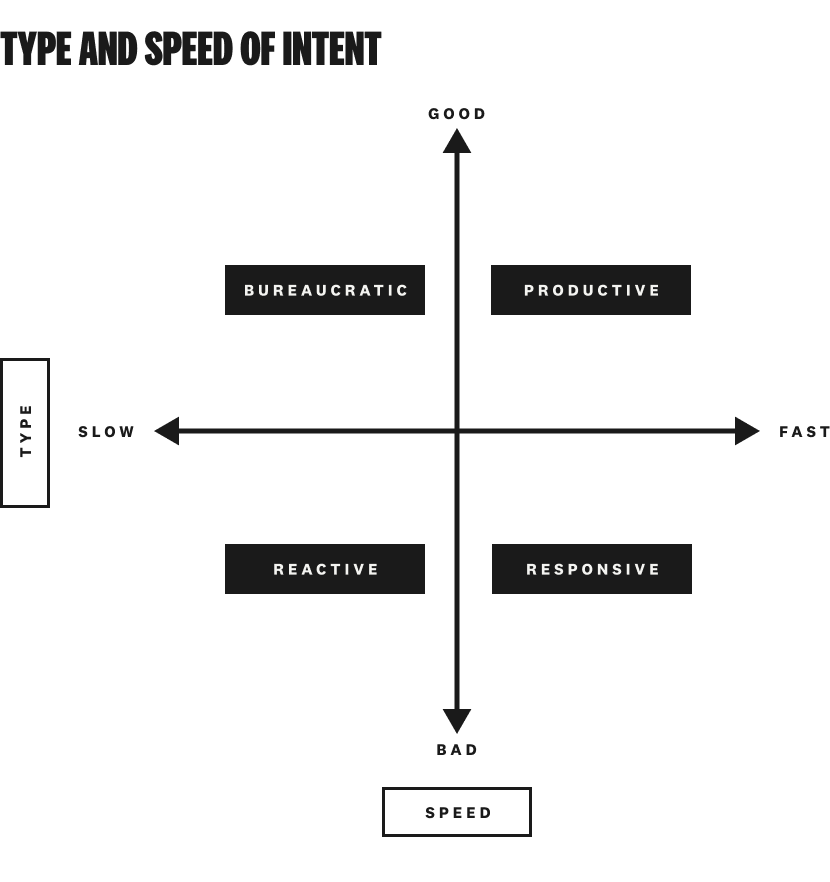

There’s an important distinction between good intent and bad intent. We don’t talk about this enough, especially the “good” part.

Both deserve a response, but the way we respond is different. The distinction matters because it unlocks what proportionate response means in practice.

Security has historically been obsessed with bad intent and relatively indifferent to good intent. My mental model looks like this:

Detecting bad intent quickly is important, but enabling good intent quickly is also important — especially in today’s business-enablement, efficiency-seeking, AI-speed work climate.

Good intent executed slowly is bureaucracy. Access requests are the canonical example of this. Speed them up with provisioning rules, JIT access, or AI, and your identity platform starts enabling productivity. Normal behavior with no concerning signals should simply be allowed to proceed (and quickly).

Bad intent (especially when paired with a sensitive action) warrants immediate responses like blocking, escalation, and investigation. Ideally. We all know things slip through the cracks, which puts us in the reactive, high-MTTR danger zone.

There’s a middle case on the spectrum, too. Risky or negligent behavior paired with non-malicious intent calls for education, coaching, or a gentle nudge.

These types of behaviors often put organizations at risk just as much as intentionally malicious behavior. They also occur far more frequently than intentionally malicious activity.

Again, proportionate response delivered in-the-moment is the goal.
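The three cases above can be sketched as a small lookup. The enum names are mine, and the choice to escalate (not block) when inferred bad intent isn't paired with a sensitive action is an assumption for illustration. The `intent` input is itself an inference from behavior, so treat the output as a recommendation for a human to confirm.

```python
from enum import Enum


class Intent(Enum):
    BENIGN = "benign"
    NEGLIGENT = "negligent"
    MALICIOUS = "malicious"


class Response(Enum):
    ALLOW = "allow"
    COACH = "coach"
    ESCALATE = "escalate"
    BLOCK_AND_INVESTIGATE = "block_and_investigate"


def proportionate_response(intent: Intent, sensitive_action: bool) -> Response:
    """Map inferred intent plus action sensitivity to a suggested response."""
    if intent is Intent.MALICIOUS:
        # Assumption: reserve blocking for sensitive actions, escalate otherwise.
        return Response.BLOCK_AND_INVESTIGATE if sensitive_action else Response.ESCALATE
    if intent is Intent.NEGLIGENT:
        # Risky but non-malicious: education, coaching, or a nudge.
        return Response.COACH
    # Normal behavior with no concerning signals proceeds quickly.
    return Response.ALLOW


print(proportionate_response(Intent.BENIGN, sensitive_action=True).value)     # allow
print(proportionate_response(Intent.NEGLIGENT, sensitive_action=True).value)  # coach
print(proportionate_response(Intent.MALICIOUS, sensitive_action=True).value)  # block_and_investigate
```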

The point is that the same surface action can warrant completely different responses depending on the intent behind it. Getting that calibration right is the difference between a positive experience with security and a bad one.

If we come down hard on someone who meant well, it breaks trust, erodes loyalty, and generates the disgruntled worker we were worried about in the first place. The wrong action on intent can create more problems downstream than the original risk ever would have.

The Chelsea Manning case is instructive here. This wasn't someone who woke up one day and decided to exfiltrate hundreds of thousands of documents. There were signals (behavioral changes, access patterns, social stressors) over an extended period.

There were multiple opportunities for proportionate action that might have changed the outcome. Instead, nothing happened until it was catastrophic. The available responses were limited at that point. Catastrophic damages required catastrophic actions.

Here’s the broader lesson with intent and action: incorrect assumptions can lead to incorrect actions. The wrong action can actually create the adversarial intent we were trying to prevent in the first place.

Without intent, security can only operate in two modes: allow or block. Intent is what makes a third mode possible: respond appropriately to what's actually happening at machine speed.

That’s why I think it’s so, so important for us to get intent right.

Intent, implemented

Let me come back to Minority Report to help explain what I think it means to get intent right.

The pre-crime association and ethical implications are worth addressing directly. Minority Report fails for specific reasons.

The precogs see a definite future with no ambiguity, no reversibility, and no chance of being wrong. The act of arrest happens before any observable evidence of wrongdoing.

More importantly, there's no human judgment in the loop. The system decides, and the decision is final.

The right implementation of intent-based security is the opposite of this in every meaningful way.

It's based on observable behavioral sequences, not prediction. The conditions that have to be met before anything escalates are defined, auditable, and defensible. AI provides context-gathering and synthesis, but humans make the final call.

What we’re talking about here isn’t pre-crime. It’s rigorous, proportionate, and adaptable security.

Instead of reacting to evidence of a breach, we can understand what's happening while there's still time to shape the outcome.

If we get this right, intent becomes part of everything we do in cybersecurity.

There are people working towards this future right now, but it's harder than it looks. It's going to take the right balance of technology and judgment to make it work.

We're still at the phase where a lot of mainstream “intent” is just words. It’s an exciting topic, for sure — but this is the danger zone for paradigm shifts.

If we over-market intent (or implement it poorly), we'll end up with another term in the buzzword graveyard right next to "zero trust" and "behavioral analytics." That would be such a shame.

We need to have the collective conviction and discipline to get intent right this time. It’s the best chance we are ever going to have.

Footnotes

¹ Minority Report is one of the earliest movies I can remember seeing in the theater.

² You can blame my time at PwC for that. If you spend enough time in client environments, you learn quickly that ill-advised ideas without a plan for implementation bring nothing but pain.

³ Not until Neuralink goes mainstream, anyway. And even if it did, there’s no way we’re going to allow organizations to tap into their employees’ minds.